About

A client from International High School approached Cozy Ventures to develop an iOS application that brings together several educational tools for IELTS preparation. The app combines speaking, writing, and grammar practice in a single AI-powered platform.

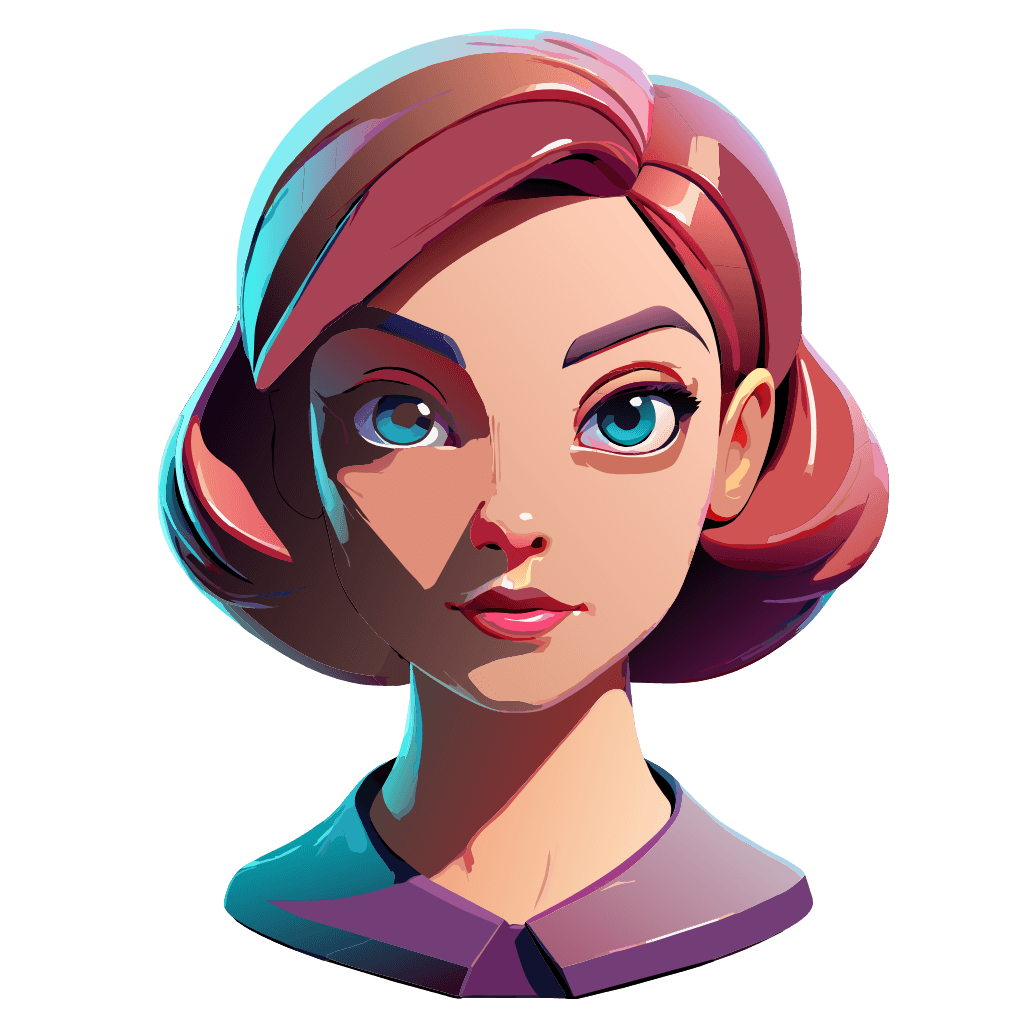

Drawing on our expertise in machine learning and large language models (LLMs), Cozy Ventures began a feasibility study for an AI-driven 3D speaking avatar. The avatar is intended to interact with users in real time with a high degree of realism, supported by LLM and lip-sync technologies.

Key Features and Functionalities Implemented

3D Animation with Lip-Sync

Available Models of 3D Animation with Lip-Sync

- Morphable Model (Fit Me): Fit Me is a 3D model that can be morphed to simulate different facial expressions and lip movements. It relies on a set of predefined facial expressions and phoneme mouth shapes, which are matched to the speech to synchronize the lips.

- Rigged Models: In animation software such as Autodesk Maya and Blender, rigged models have an internal skeleton that animators move to create realistic facial and mouth movements.

- Performance Capture Systems: Systems such as Vicon and OptiTrack record real human movement with cameras and sensors, then apply it to 3D avatars for highly realistic animation, including detailed lip-syncing.

- AI-Driven Models: Deep learning models such as Wav2Lip synchronize lip movements with spoken audio. They are trained on large datasets of speech and facial movement to produce natural results. Speech-recognition networks such as DeepSpeech can supply the audio features that drive this kind of animation.

- Real-Time Animation Software: Tools such as Adobe Character Animator and FaceRig allow real-time puppeteering of 2D and 3D avatars with lip-sync. They track facial movements through a webcam and use the speech input to animate the avatar in real time.
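To illustrate the morphable-model approach described above, the sketch below maps timed phonemes to viseme (mouth-shape) keyframes. It then cross-fades linearly between neighbouring keyframes to get per-frame blend-shape weights.

The phoneme-to-viseme table, the viseme names, and the timings are hypothetical placeholders, not Fit Me's actual data. A production rig would use its own viseme set and smoother easing curves.

```python
from dataclasses import dataclass

# Hypothetical phoneme-to-viseme table: a tiny illustrative subset, not Fit Me's data.
PHONEME_TO_VISEME = {
    "SIL": "rest",
    "AA": "open", "IY": "wide", "UW": "round",
    "M": "closed", "B": "closed", "P": "closed",
    "F": "lip_bite", "V": "lip_bite",
}
VISEMES = ["rest", "open", "wide", "round", "closed", "lip_bite"]


@dataclass
class TimedPhoneme:
    phoneme: str
    start: float  # seconds
    end: float    # seconds


def viseme_keyframes(phonemes):
    """Place one (time, viseme) keyframe at the midpoint of each phoneme."""
    return [((p.start + p.end) / 2, PHONEME_TO_VISEME.get(p.phoneme, "rest"))
            for p in phonemes]


def blend_weights(keys, t):
    """Cross-fade linearly between the keyframes surrounding time t.

    Returns blend-shape weights in [0, 1] that sum to 1.
    """
    weights = {v: 0.0 for v in VISEMES}
    if t <= keys[0][0]:
        weights[keys[0][1]] = 1.0
    elif t >= keys[-1][0]:
        weights[keys[-1][1]] = 1.0
    else:
        for (t0, v0), (t1, v1) in zip(keys, keys[1:]):
            if t0 <= t <= t1:
                alpha = 0.0 if t1 == t0 else (t - t0) / (t1 - t0)
                # "+=" keeps the sum at 1 even if both keys share a viseme.
                weights[v0] += 1.0 - alpha
                weights[v1] += alpha
                break
    return weights


if __name__ == "__main__":
    speech = [TimedPhoneme("SIL", 0.0, 0.1), TimedPhoneme("M", 0.1, 0.2),
              TimedPhoneme("AA", 0.2, 0.4)]
    keys = viseme_keyframes(speech)
    fps = 30
    for frame in range(12):  # 0.4 s of animation at 30 fps
        w = blend_weights(keys, frame / fps)
        print(frame, {k: round(v, 2) for k, v in w.items() if v > 0})
```

Linear interpolation is the simplest choice. It makes the mouth move continuously between shapes instead of snapping from one viseme to the next.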

Obstacles to Using Real-Time 3D Avatars on Smartphones or Tablets

- Computational Power: High-quality 3D rendering combined with real-time lip-sync animation is computationally demanding. Despite recent advances, smartphones and tablets still lag behind PCs in processing power, particularly in graphics.

- Battery Life and Overheating: Complex 3D animation can drain the battery quickly and make the device overheat, which is a problem for sustained workloads such as advanced lip-sync animation.

- Memory Constraints: Real-time animation and AI-driven models need substantial memory for temporary data, textures, and models. This limits how complex an animation can be while still running smoothly on a mobile device.

- AI Model Size: Deep learning models used for lip-syncing are typically large and need significant computational power to run effectively.

- Latency Issues: In real-time applications, any delay between spoken words and lip movements degrades the user experience, so consistently smooth performance is critical.

Despite considerable advances in 3D avatar technology, running these sophisticated animations in real time on mobile devices remains a significant challenge. Overcoming these hurdles will depend on further improvements in mobile processors, AI model optimization, and software efficiency.
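The latency and computational-power obstacles above can be made concrete with a per-frame budget check: at a target frame rate, the work for each frame must finish within one frame interval.

The stage names and millisecond figures below are hypothetical placeholders for illustration, not measurements from this project.

```python
def frame_budget_ms(fps: float) -> float:
    """Time available to produce one frame at the given frame rate, in milliseconds."""
    return 1000.0 / fps


def check_pipeline(stage_ms: dict, fps: float):
    """Sum sequential per-frame stage costs and compare them with the frame budget.

    Returns (total_ms, budget_ms, fits).
    """
    total = sum(stage_ms.values())
    budget = frame_budget_ms(fps)
    return total, budget, total <= budget


# Hypothetical per-frame costs in milliseconds: placeholders, not measurements.
stages = {"audio_features": 3.0, "lip_sync_inference": 25.0, "render": 8.0}

for fps in (24, 30, 60):
    total, budget, fits = check_pipeline(stages, fps)
    verdict = "fits" if fits else "too slow"
    print(f"{fps} fps: needs {total:.1f} ms of a {budget:.1f} ms budget ({verdict})")
```

With these placeholder figures, the 36 ms pipeline fits the 41.7 ms budget at 24 fps but not the 33.3 ms budget at 30 fps.

Pipelining helps only partly. Running the stages on different frames in parallel can raise throughput to the rate of the slowest stage. However, the end-to-end delay between audio and lips still includes every stage.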

2D Animation with Lip-Sync

2D avatar animation, particularly lip synchronization, has advanced significantly thanks to models such as Wav2Lip. This section covers how Wav2Lip works, how it is used, existing real-time solutions, the challenges of running it in real time, and its pros and cons.

Overview of Wav2Lip

Wav2Lip is a deep learning model developed specifically for accurate lip-syncing in videos. It takes an audio track and a video clip or still image of a face, and generates a video in which the lips move in sync with the audio.

Training relies on two discriminators:

- a pre-trained lip-sync discriminator (a "lip-sync expert") that checks whether mouth movements match the audio;
- a visual quality discriminator that keeps the generated faces realistic.

For an example of a real-time implementation, see the Real-Time Wav2Lip project on GitHub.
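The lip-sync discriminator mentioned above can be illustrated with a small sketch of its scoring step. In the Wav2Lip paper, a pre-trained expert network embeds a short window of mouth frames and the matching audio. The cosine similarity of the two embeddings is read as the probability that they are in sync, and a binary cross-entropy loss on that probability penalizes the generator.

The NumPy version below shows only that scoring step. The random vectors stand in for the expert's real embeddings, and the embedding size of 512 is an arbitrary assumption.

```python
import numpy as np


def sync_probability(audio_emb: np.ndarray, video_emb: np.ndarray, eps: float = 1e-8) -> np.ndarray:
    """Row-wise cosine similarity, read as the probability that audio and lips are in sync.

    With non-negative (ReLU) embeddings the value lies in [0, 1].
    """
    a = audio_emb / (np.linalg.norm(audio_emb, axis=-1, keepdims=True) + eps)
    v = video_emb / (np.linalg.norm(video_emb, axis=-1, keepdims=True) + eps)
    return np.sum(a * v, axis=-1)


def sync_loss(audio_emb, video_emb, labels, eps: float = 1e-7) -> float:
    """Binary cross-entropy on the sync probability (label 1 = in sync, 0 = off sync)."""
    p = np.clip(sync_probability(audio_emb, video_emb), eps, 1 - eps)
    return float(np.mean(-(labels * np.log(p) + (1 - labels) * np.log(1 - p))))


rng = np.random.default_rng(0)
# Random stand-ins for the expert's embeddings; ReLU keeps them non-negative.
audio = np.maximum(rng.normal(size=(4, 512)), 0.0)
video_matched = audio + 0.05 * np.abs(rng.normal(size=(4, 512)))  # nearly aligned
video_random = np.maximum(rng.normal(size=(4, 512)), 0.0)          # unrelated

in_sync = np.ones(4)
print(sync_loss(audio, video_matched, in_sync))  # low loss: embeddings agree
print(sync_loss(audio, video_random, in_sync))   # higher loss: embeddings disagree
```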

Implementation Details

- Model Architecture: Wav2Lip is built from convolutional neural networks (CNNs), which are well suited to spatial data such as images and video frames.

- Data Input: The model takes a target face (either a video clip or a still image) and the corresponding audio track as inputs.

- Training Process: It is trained on a large dataset of talking-face video clips and their matching audio (LRS2 in the original paper), learning to align lip movements closely with spoken words.

- Integration in Real-Time Apps: Wav2Lip can be built into software platforms and applications for real-time use, through APIs or through custom integrations tailored to each platform.
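The Data Input point above can be made more concrete. As we understand the public Wav2Lip code, the audio pipeline works like this:

- audio is converted to an 80-band mel spectrogram at about 80 spectrogram steps per second (16 kHz audio with a 200-sample hop);
- each 25 fps video frame is paired with a 16-step mel window, about 0.2 s of audio.

The sketch below reproduces only that alignment arithmetic with NumPy. Treat these parameter values as assumptions to check against the repository's settings. The input is a random array, not a real spectrogram.

```python
import numpy as np


def mel_windows(mel: np.ndarray, video_fps: int = 25, mel_fps: int = 80,
                window: int = 16) -> np.ndarray:
    """Slice a (n_mels, n_steps) spectrogram into one audio window per video frame.

    Integer arithmetic keeps the frame-to-mel mapping exact.
    Returns an array of shape (n_frames, n_mels, window).
    """
    n_mels, n_steps = mel.shape
    if n_steps < window:
        raise ValueError("audio too short for a single window")
    n_frames = n_steps * video_fps // mel_fps
    chunks = []
    for i in range(n_frames):
        start = i * mel_fps // video_fps       # mel step aligned with video frame i
        start = min(start, n_steps - window)   # clamp the final windows to the end
        chunks.append(mel[:, start:start + window])
    return np.stack(chunks)


# 10 s of audio -> 800 mel steps -> 250 video frames at 25 fps.
mel = np.random.default_rng(0).random((80, 800), dtype=np.float32)
windows = mel_windows(mel)
print(windows.shape)  # (250, 80, 16)
```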

Existing Real-Time Solutions

- Web Applications: Various web tools use Wav2Lip or similar models to offer real-time or near-real-time lip-sync.

- Mobile Apps: Some mobile apps use this technology to create videos with synchronized audio and lip movement.

- Streaming Software: The model can be integrated with live-streaming software for real-time avatar control and lip-syncing, making the experience more interactive.

Each of these implementations shows the flexibility of Wav2Lip across digital media environments, expanding how creators can produce dynamic and engaging content.
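In streaming integrations like those above, audio usually arrives in packets of arbitrary size. A frame-based lip-sync model, by contrast, consumes a fixed amount of audio per video frame, so the stream has to be buffered.

A minimal sketch of that buffering step follows. It assumes 16 kHz mono audio and 25 fps video, which gives 640 samples per frame. The class name and parameters are hypothetical, and a real integration would also supply surrounding audio context to match the model's input window.

```python
from collections import deque
from typing import Iterable, List


class FrameAudioBuffer:
    """Hypothetical helper: turns unevenly sized audio packets into one
    fixed-size chunk of samples per video frame."""

    def __init__(self, sample_rate: int = 16000, fps: int = 25):
        if sample_rate % fps:
            raise ValueError("sample_rate must be divisible by fps")
        self.samples_per_frame = sample_rate // fps  # 640 at 16 kHz and 25 fps
        self._pending = deque()

    @property
    def pending(self) -> int:
        """Number of buffered samples not yet emitted."""
        return len(self._pending)

    def push(self, samples: Iterable[float]) -> List[List[float]]:
        """Buffer new samples and return every complete per-frame chunk."""
        self._pending.extend(samples)
        chunks = []
        while len(self._pending) >= self.samples_per_frame:
            chunks.append([self._pending.popleft()
                           for _ in range(self.samples_per_frame)])
        return chunks


if __name__ == "__main__":
    buf = FrameAudioBuffer()
    ready = []
    for packet_size in (300, 500, 1000):  # packets of uneven size
        ready += buf.push([0.0] * packet_size)
    print(len(ready), buf.pending)  # 2 chunks emitted, 520 samples still buffered
```

`push` returns a list rather than acting as a generator. With a generator, samples would only be buffered if the caller iterated over the result.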

Challenges with Real-Time Execution

- Model Complexity: Wav2Lip is an advanced, complex model, which makes it tricky to convert to CoreML, the machine learning framework used on Apple devices. Keeping the converted model both correct and fast is a demanding task.

- Performance Optimization: CoreML runs efficiently on iOS devices, but running a demanding model like Wav2Lip in real time can strain the device's resources. Extra optimization may be needed for smooth, accurate output without delays.

- Memory and Storage Limits: Mobile devices have less memory and storage than desktop computers, so the model and its data must be compact enough to fit within these limits without losing efficiency.

- Battery Life Impact: Running deep learning models continuously in real time can drain the battery quickly, which degrades the user experience.

- Heat Generation: Keeping the processor busy non-stop with real-time tasks can make the device overheat. This can slow the device down and may cause wear over time.

- Latency Issues: For a good experience, the model must sync lips with audio with minimal delay. That means reducing both processing time and network lag, especially if some computations run online.

- iOS Compatibility: The model needs to work across a range of iOS versions and Apple devices with different hardware capabilities.
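The memory and storage limits listed above can be estimated with simple arithmetic. Weight storage is roughly the parameter count times the bytes per weight, which is why reducing precision (for example, from 32-bit to 16-bit floats) is a common step before deploying on mobile.

The parameter count below is a hypothetical round number, not Wav2Lip's actual size. The estimate also ignores activations and runtime overhead, which add to the real footprint.

```python
# Bytes needed to store one weight at each precision.
BYTES_PER_WEIGHT = {"float32": 4, "float16": 2, "int8": 1, "int4": 0.5}


def weight_size_mb(num_params: int, dtype: str) -> float:
    """Approximate size of the weights alone, in megabytes (10**6 bytes)."""
    return num_params * BYTES_PER_WEIGHT[dtype] / 1e6


params = 40_000_000  # hypothetical parameter count, for illustration only
for dtype in BYTES_PER_WEIGHT:
    print(f"{dtype:>8}: {weight_size_mb(params, dtype):6.1f} MB")
```

For this hypothetical model, halving the precision halves the weight storage, from 160 MB at float32 to 80 MB at float16. Lower precision can cost accuracy, though, so the output should be re-validated after any reduction.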

Advantages:

- Accuracy: Wav2Lip provides highly accurate lip-syncing.

- Flexibility: It works with both video and static images.

- Application Variety: It is useful in domains such as virtual meetings, content creation, and entertainment.

Disadvantages:

- Computational Resources: It requires significant computational power for real-time processing.

- Data Dependency: Performance depends heavily on the quality and diversity of the training data.

- Latency: In real-time applications, latency can be an issue, depending on the computational resources available.

About CoreML

CoreML is Apple's toolkit for integrating machine learning models into apps for iOS, macOS, watchOS, and tvOS. It's designed to be fast and to run models directly on devices, which helps keep user data private and secure. CoreML supports various types of models, including deep learning and more traditional machine learning models.

Why and How to Convert a PyTorch Model to CoreML

Why Convert?

- Better Performance: CoreML is optimized for Apple hardware and can use the CPU, GPU, and Neural Engine for faster processing.

- Privacy and Security: Running models directly on the device keeps data private and secure, since it never needs to be sent to a server.

- Smooth Integration: CoreML models work seamlessly with Xcode and Apple's other development tools, making apps easier to build and update.

- Energy Efficiency: Processing data on the device can use less energy than sending it to and from a server.

How to Convert:

- Prepare the PyTorch Model: Make sure the model is fully trained and saved correctly, and switch it to evaluation mode before conversion.

- Use a Conversion Tool: Apple's coremltools is a Python package that converts PyTorch models to CoreML. First trace the model with torch.jit.trace to obtain a TorchScript version. Current coremltools releases convert TorchScript directly, so the intermediate ONNX step used in older workflows is no longer needed.

- Perform the Conversion: Pass the traced model to coremltools' convert function, specifying the input shapes and, where needed, settings such as the minimum iOS version and numeric precision so that the model converts correctly.

- Test and Optimize: Once converted, test the CoreML model on Apple devices to confirm that its outputs match the original and that it runs fast enough. Further tuning may be needed for better performance.

- App Integration: Finally, add the optimized model to an Xcode project and run it through the CoreML framework.

This process allows developers to combine PyTorch's robust training capabilities with CoreML's efficient, device-optimized model execution.

Results

After extensive research and initial testing, we have laid a solid foundation for launching the new app. The technology review clarified the path for further development, showing what the app can do and what remains to be done before a full rollout.

Want to know more?

If you're interested in similar AI-powered technology, we'd love to hear from you at Cozy Ventures. We're excited about teaming up on educational projects that can change the way we learn. Get in touch to see how we can work together.